As technology continues to evolve, the demand for high-speed cameras is growing rapidly. More powerful processors and advancements in camera technology have allowed embedded cameras to achieve higher frame rates than ever before. This has enabled them to capture high-quality image and video data, making them ideal for a wide range of applications in areas such as manufacturing, smart cities, industrial automation, healthcare, and sports.

While not every application requires a high frame rate or high-speed camera, understanding the frame rate required for a particular application is critical in embedded vision. Higher frame rates also require more processing power because more data must be transferred within a given time frame.

In this article, we will explore what frame rate is, related concepts, and the importance of high frame rates in embedded vision. Whether you’re an engineer, developer, or simply curious about this topic, this article will provide you with a comprehensive understanding of the role of high-speed cameras in modern-day embedded vision applications.

What are Resolution and Frame Rate?

The number of pixels that can be recorded in an image by a camera is referred to as its resolution. The greater the resolution, the more pixels are collected, resulting in a more detailed and sharper image. The number of horizontal and vertical pixels is commonly used to indicate resolution. For example, a Full HD image would be 1920 × 1080 pixels.

Resolution is also commonly expressed as the total number of pixels in an image, in megapixels (MP). This is obtained by multiplying the number of horizontal pixels by the number of vertical pixels. For example, a Full HD camera has 1920 × 1080 = 2,073,600 pixels, which rounds to 2 million, so its resolution is 2MP.
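The megapixel arithmetic above can be checked with a few lines of Python. This is only an illustrative sketch; the function name `megapixels` is made up for this example.

```python
def megapixels(width_px: int, height_px: int) -> float:
    """Return the total pixel count of a frame, in millions of pixels."""
    return width_px * height_px / 1_000_000


full_hd_mp = megapixels(1920, 1080)
print(f"Full HD: {full_hd_mp:.2f} MP")  # 2.07 MP, usually quoted as 2MP
```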

In contrast, frame rate refers to the number of frames that a camera can acquire in one second. It is usually expressed in frames per second (fps).

Higher frame rates result in smoother and more fluid video movements. A video with a frame rate of 30 frames per second will display 30 still images per second, resulting in a smooth video. However, a video with a frame rate of 60 fps will appear even smoother.

Resolution and frame rate limit each other. The total video bandwidth is roughly calculated by multiplying the resolution (in pixels per frame) by the frame rate (in frames per second) and the number of bits per pixel. As the resolution increases, so does the amount of data that must be processed. This can place a load on the host processor’s bandwidth, restricting the frame rate. Likewise, as the frame rate increases, the quantity of data that must be processed and communicated in a given period also increases, limiting the resolution that can be obtained.
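The rough bandwidth calculation described above can be sketched as follows. The pixel rate is resolution times frame rate; multiplying by the bits per pixel turns it into a data rate. The 8 bits per pixel used here is an assumption for illustration, and the figures are for uncompressed video with no protocol overhead.

```python
def video_data_rate_mbps(width_px, height_px, fps, bits_per_pixel):
    """Uncompressed video data rate in megabits per second (no protocol overhead)."""
    return width_px * height_px * bits_per_pixel * fps / 1e6


# Assumed for illustration: 8 bits per pixel, 60 fps.
full_hd_rate = video_data_rate_mbps(1920, 1080, 60, 8)
uhd_4k_rate = video_data_rate_mbps(3840, 2160, 60, 8)

print(f"Full HD @ 60 fps: {full_hd_rate:.0f} Mbps")
print(f"4K      @ 60 fps: {uhd_4k_rate:.0f} Mbps")
print(f"4K needs {uhd_4k_rate / full_hd_rate:.0f}x the bandwidth of Full HD")
```

At the same frame rate and bit depth, 4K carries four times the data of Full HD, which is why one of the two parameters usually has to give.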

As a result, there is a trade-off between image quality and speed when choosing a camera’s resolution and frame rate. High frame rates, which are crucial in applications like sports analytics, robotics, and traffic monitoring, may not be possible at an extremely high resolution. So, the key is in finding a balance between the two parameters.

The decision about resolution and frame rate ultimately comes down to the requirements of the individual applications, as well as the system’s available bandwidth and computing capability. It is recommended to speak to a camera consultant before you go ahead and finalize a resolution and frame rate for your application.

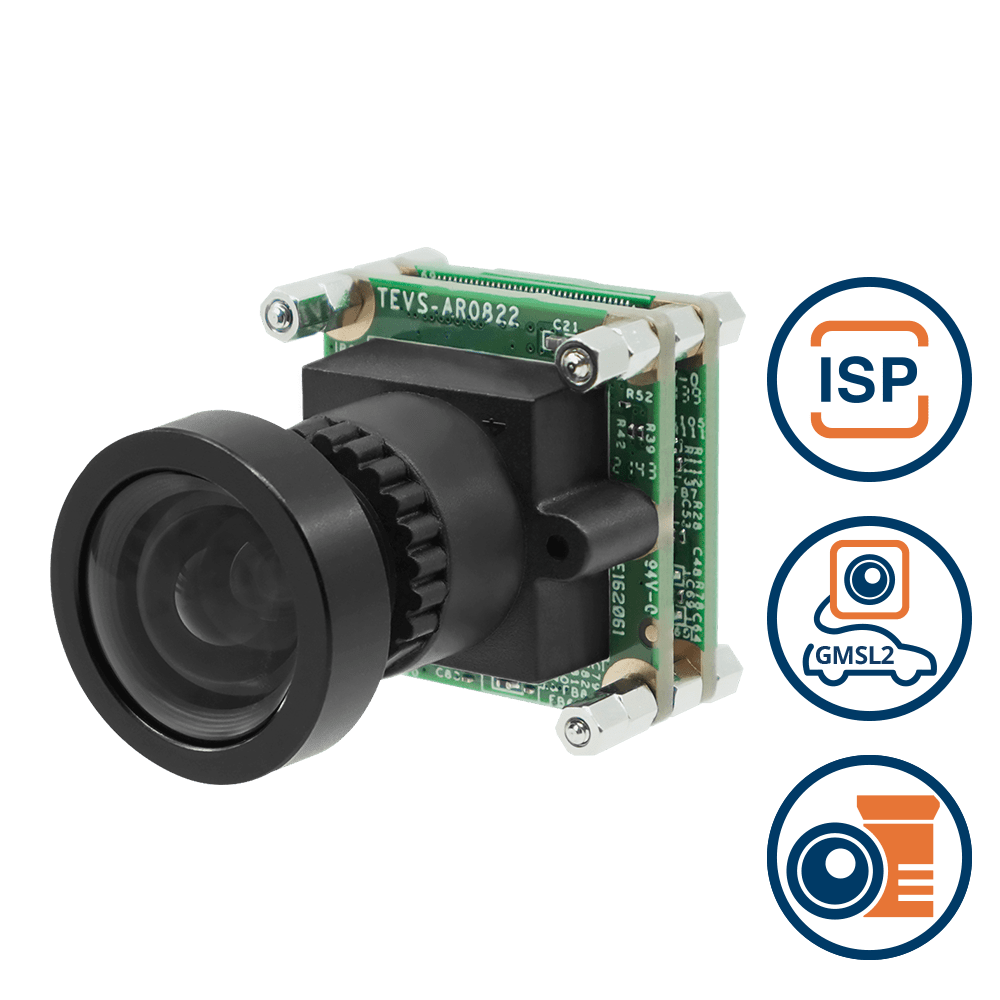

USB3 Type-C Enclosed Camera with onsemi AR0822 8MP 4K Rolling Shutter with Onboard ISP + IR-Cut Filter + Incl. 1 Meter USB C Cable with C Mount Body

VCS-AR0822-CB

- onsemi AR0822 8MP Rolling Shutter Sensor

- 4K HDR Imaging Capabilities

- Near Infra-Red Enhancement for Outdoor Applications

- Designed for Low Light Applications

- C-Mount for Interchangeable Lenses

- UVC USB Type-C 5 Gbps Connector

- Plug & Play with Windows & Linux OS

- VizionViewer™ configuration utility

- VizionSDK for custom development

| Specification | Value |
| --- | --- |
| Sensor | onsemi AR0822 |
| Shutter | Rolling |
| Megapixels | 8MP |
| Chromaticity | Color, Monochrome |
| Interface | USB3 |

What is a High Frame Rate Camera?

There is no standard definition or threshold for what is considered “high” in terms of frame rate. In embedded vision, even 30 fps might be considered high at a resolution of 4K and above. On the other hand, for Full HD, 60 to 120 fps is considered high.

In machine vision, a high frame rate is usually achieved by compromising on resolution, typically by reducing the ROI (Region Of Interest). It might also require you to modify parameters like the chroma of the image (for example, by streaming raw Bayer data instead of a processed color format) and the bit depth (number of bits per pixel component).

Applications of High Frame Rate Cameras in Embedded Vision

Next, let us look at a few embedded vision applications where high frame rate cameras are used.

High frame rate cameras are used in sports analytics to capture fast-moving athletes in action, such as a baseball pitcher throwing a pitch or a sprinter crossing the finish line. They are also used for ball tracking. The ball tracking data is then used to analyze player performance and conduct match analyses with the help of a computer vision algorithm.

In robotics, high frame rate cameras are used in robotic arms or other types of robots including cleaning robots, inventory robots, and pick and place robots. This allows robots to:

- Capture barcodes and other objects while they are on the move.

- Take images of objects in motion for picking and placing, machine tending, product sorting, etc.

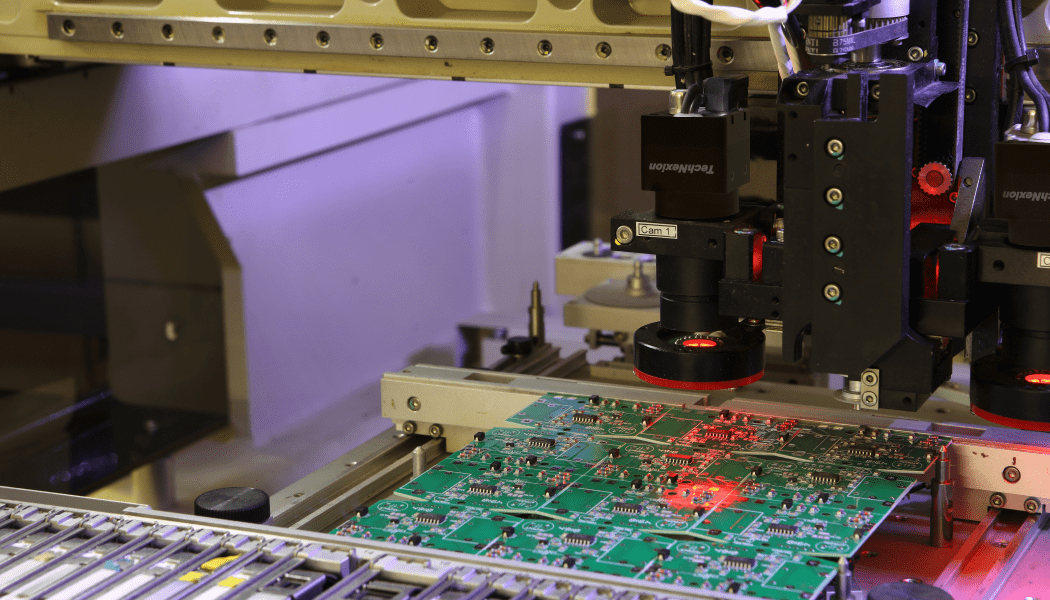

In industrial inspection, high frame rate cameras are used to capture images of high-speed manufacturing processes, such as the assembly of electronic components or the inspection of products on a conveyor belt.

Why is a High Frame Rate Camera Required in Embedded Vision?

As we discussed, in an embedded vision system, a high frame rate camera is required to capture fast-moving objects or events that would otherwise appear blurred or distorted at a lower frame rate. The higher the frame rate, the higher the number of images captured per second, and therefore the less likely that fast-moving objects will appear blurred.

Exposure time is also a key factor. It is the duration for which the pixels of the sensor are “exposed” to the incoming light. The longer the exposure time, the blurrier an image will appear if objects are moving rapidly in front of the sensor. Exposure time also caps the frame rate, since a frame cannot normally be exposed for longer than the interval between frames.

To understand this better, let’s take the example of a moving car. If we capture its image at a low frame rate, say 10 fps, the image is likely to appear blurry because the car moves a significant distance during the exposure time, and again during the interval between frame captures.

In the above image, you can see the relative difference in motion blur between the car and the cycle. This is because the higher the speed, the greater the extent of blur (the FOV, or Field Of View, matters too). However, if we capture the same car with a high frame rate camera, say 120 fps, the images would be captured more frequently, and we would be able to see the car more clearly.

Another example is a fan blade, which rotates at high speed. If we capture an image of a fan blade at a low frame rate, the blade may appear to be stationary or blurred. However, if we capture the same blade with a high frame rate camera, the blade will appear more clearly defined with minimal blur. Similarly, turbines and wheels are also fast-moving objects that require high frame rate cameras to capture their motion accurately.
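The relationship between object speed, exposure time, field of view, and blur described in this section can be estimated with a simple calculation. The sketch below assumes the object moves parallel to the image plane at constant speed. All scene values (speeds, a 10 m wide field of view, a 1920-pixel-wide image, the exposure times) are hypothetical and chosen only for illustration.

```python
def motion_blur_px(speed_m_s, exposure_s, fov_width_m, image_width_px):
    """Approximate blur length in pixels for an object crossing the field of view."""
    distance_m = speed_m_s * exposure_s               # distance moved during the exposure
    pixels_per_metre = image_width_px / fov_width_m   # image scale at the object
    return distance_m * pixels_per_metre


# Hypothetical scene: 10 m wide field of view imaged onto 1920 pixels.
car_long_exposure = motion_blur_px(20.0, 0.010, 10.0, 1920)    # car, 10 ms exposure
car_short_exposure = motion_blur_px(20.0, 0.001, 10.0, 1920)   # car, 1 ms exposure
cycle_long_exposure = motion_blur_px(5.0, 0.010, 10.0, 1920)   # cycle, 10 ms exposure

print(f"Car,   10 ms exposure: {car_long_exposure:.1f} px of blur")
print(f"Car,    1 ms exposure: {car_short_exposure:.1f} px of blur")
print(f"Cycle, 10 ms exposure: {cycle_long_exposure:.1f} px of blur")
```

Under these assumptions, the faster object blurs more at the same exposure time, and shortening the exposure reduces the blur in proportion.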

What Determines the Frame Rate in Embedded Cameras?

In an embedded camera, several factors determine the maximum frame rate that can be achieved. These include the resolution of the sensor, the capacity of the host processor, the quantum efficiency (QE) of the sensor, the bandwidth of the interface, the chroma and bit depth of the sensor, and the number of active pixels.

Resolution

The resolution of the sensor plays a critical role in determining the maximum frame rate that can be achieved. As mentioned earlier, if a camera has a high-resolution sensor, the maximum frame rate that it can achieve will be lower than that of a camera with a lower-resolution sensor. For example, if a 4K camera can achieve a maximum frame rate of 30 fps at full resolution, the same camera operating at Full HD resolution can achieve a much higher frame rate.
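As a rough illustration of the 4K and Full HD example above, the sketch below assumes the camera can move a fixed number of pixels per second regardless of mode. That is an idealization: real sensors, ISPs, and interfaces rarely scale this cleanly, so the result should be read as an upper-bound estimate rather than a specification.

```python
def scaled_max_fps(ref_width, ref_height, ref_fps, width, height):
    """Estimate max fps at a new resolution, assuming a constant pixel throughput."""
    pixel_rate = ref_width * ref_height * ref_fps   # pixels per second in the reference mode
    return pixel_rate / (width * height)


# Reference mode from the text: 4K at 30 fps. Target mode: Full HD.
full_hd_estimate = scaled_max_fps(3840, 2160, 30, 1920, 1080)
print(f"Estimated Full HD ceiling: {full_hd_estimate:.0f} fps")
```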

Sensor Quantum Efficiency

Quantum efficiency (QE) is a measure of how efficiently a sensor converts incoming light into electrical charge. A sensor with a higher QE will be able to capture an image with a shorter exposure time under a given lighting condition. Thus, to prevent motion blur in high frame rate applications, sensors with a higher QE will perform better.
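To make the link between QE and exposure time concrete, the sketch below assumes the collected signal is proportional to QE multiplied by exposure time under the same lighting, and ignores noise, pixel size, and gain. The QE values and the 4 ms reference exposure are hypothetical.

```python
def equivalent_exposure_ms(ref_exposure_ms, ref_qe, new_qe):
    """Exposure time giving the same signal on a sensor with a different QE.

    Assumes signal is proportional to QE * exposure time under identical lighting.
    """
    return ref_exposure_ms * ref_qe / new_qe


# Hypothetical: a sensor with 40% QE needs 4 ms; how long does an 80% QE sensor need?
shorter_exposure = equivalent_exposure_ms(4.0, 0.40, 0.80)
print(f"Equivalent exposure: {shorter_exposure:.1f} ms")
```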

Host Processor Capabilities

The capacity of the host processor is another crucial factor that determines the maximum frame rate. The higher the processing power of the host processor, the higher the maximum frame rate that can be achieved. Modern host processing platforms like NVIDIA Jetson come with a very high processing capacity that can handle heavy loads of AI (Artificial Intelligence) operations. The latest processor in the Jetson series, NVIDIA Jetson AGX Orin, has a maximum processing power of 275 TOPS while its predecessor NVIDIA Jetson AGX Xavier has a maximum capacity of 32 TOPS.

Bandwidth of the Interface

The bandwidth of the interface also plays a critical role in determining the maximum frame rate. USB cameras have less bandwidth than MIPI cameras (with four lanes) but more than GigE cameras. However, the newest SerDes (Serializer-Deserializer) cameras have more bandwidth than USB and MIPI cameras, and offer more robust connections between the host processor and the sensor itself.
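The effect of interface bandwidth on frame rate can be estimated by dividing the link rate by the size of one frame. The sketch below uses nominal link rates (1 Gbps for GigE, 5 Gbps for USB 3.0) and assumes 2.5 Gbps per lane for a four-lane MIPI CSI-2 link; the lane rate depends on the platform, so treat it as an assumption. Protocol overhead is ignored, so real-world figures will be lower. SerDes links are left out because their rates vary by generation.

```python
# Nominal link rates in Gbps. The MIPI figure assumes 4 lanes at 2.5 Gbps each.
NOMINAL_LINK_GBPS = {
    "GigE": 1.0,
    "USB 3.0": 5.0,
    "MIPI CSI-2 (4 lanes)": 4 * 2.5,
}


def max_fps_for_link(link_gbps, width_px, height_px, bits_per_pixel):
    """Upper bound on frame rate for uncompressed video, ignoring protocol overhead."""
    bits_per_frame = width_px * height_px * bits_per_pixel
    return link_gbps * 1e9 / bits_per_frame


# Assumed stream: Full HD at 8 bits per pixel.
estimates = {
    name: max_fps_for_link(gbps, 1920, 1080, 8)
    for name, gbps in NOMINAL_LINK_GBPS.items()
}
for name, fps in estimates.items():
    print(f"{name:<22} up to about {fps:.0f} fps")
```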

Chroma and Bit Depth

Chroma and bit depth also play a crucial role in determining the maximum frame rate. A monochrome camera requires less bandwidth than a color camera, and therefore a higher frame rate can be achieved. Bit depth, which is the number of bits per pixel component, is set by the readout mode. Cameras commonly offer 8, 12, and 16 bits. The higher the bit depth, the more tonal levels each pixel can represent, and the larger each frame becomes. Larger frames mean a lower frame rate for a given bandwidth.
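The sketch below compares frame sizes for a few common pixel formats at Full HD. The bits-per-pixel figures are the usual values for these formats (12-bit raw is assumed to be tightly packed); the selection of formats is illustrative rather than a list of what any particular camera supports.

```python
# Bits per pixel for some common output formats (12-bit raw assumed packed).
BITS_PER_PIXEL = {
    "Mono, 8-bit": 8,
    "Raw Bayer, 12-bit packed": 12,
    "YUV 4:2:2, 8-bit": 16,
    "RGB, 8-bit per channel": 24,
}


def frame_size_bytes(width_px, height_px, bits_per_pixel):
    """Size of one uncompressed frame in bytes."""
    return width_px * height_px * bits_per_pixel // 8


baseline = frame_size_bytes(1920, 1080, BITS_PER_PIXEL["Mono, 8-bit"])
for name, bpp in BITS_PER_PIXEL.items():
    size = frame_size_bytes(1920, 1080, bpp)
    # At a fixed bandwidth, the achievable frame rate scales inversely with frame size.
    print(f"{name:<26} {size / 1e6:5.2f} MB/frame, "
          f"{baseline / size:.2f}x the mono 8-bit frame rate")
```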

Number of Active Pixels

Finally, the number of active pixels also determines the maximum frame rate. The region of interest (ROI) determines the number of active pixels. The larger the ROI, the higher the number of pixels, and the lower the maximum frame rate you can achieve.
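One simple way to picture the ROI effect is a row-by-row readout model, in which the frame time is the number of rows read multiplied by a fixed time per row. This is an assumption about how the sensor reads out, not a property stated for any specific camera, and it ignores blanking intervals. The 15.4 µs line time is a hypothetical value.

```python
LINE_TIME_S = 15.4e-6  # hypothetical time to read out one row, in seconds


def roi_max_fps(roi_rows, line_time_s=LINE_TIME_S):
    """Frame rate ceiling under a simple row-by-row readout model (no blanking)."""
    return 1.0 / (roi_rows * line_time_s)


for rows in (2160, 1080, 540):
    print(f"ROI height {rows:>4} rows: about {roi_max_fps(rows):.0f} fps")
```

Under this model, halving the ROI height roughly doubles the achievable frame rate.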

How to Choose the Right Frame Rate for Your Application?

Choosing the right frame rate for your application is a critical decision that can impact the overall performance of your embedded vision system.

The first consideration should be the nature of the object being captured. If the object is fast-moving, it requires a higher frame rate to capture the necessary details without any blur.

The next step is to look at the other factors mentioned in the previous section and determine if any of them is a higher priority than achieving a high frame rate. For example, if bandwidth is limited, you may need to reduce the frame rate to prevent data loss. Similarly, if the host processor’s capacity is limited, you may need to balance the resolution and frame rate to achieve optimal performance.

Once you have fixed the values for these parameters, you can define an appropriate frame rate for your application. It is essential to consider the application’s requirements and balance them against the available resources to achieve the best possible performance.
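As a starting point for the first consideration above, one rough way to size the frame rate is to decide how many frames should contain the object while it crosses the field of view. The sketch below assumes constant speed and motion across the full width of the field of view. The conveyor speed, field of view, and frame count are hypothetical.

```python
def min_fps_for_frames_in_view(speed_m_s, fov_width_m, frames_needed):
    """Minimum frame rate so an object crossing the FOV appears in `frames_needed` frames."""
    time_in_view_s = fov_width_m / speed_m_s   # how long the object stays in the FOV
    return frames_needed / time_in_view_s


# Hypothetical conveyor: items move at 2 m/s through a 0.5 m wide field of view,
# and each item should appear in at least 3 frames.
required_fps = min_fps_for_frames_in_view(2.0, 0.5, 3)
print(f"Minimum frame rate: {required_fps:.0f} fps")
```

The result is only a lower bound from the motion side; it still has to be checked against the bandwidth and processing limits discussed earlier.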

TechNexion: High Frame Rate Cameras for Embedded Vision

It’s important to note that not every use case requires a high frame rate camera.

We are a leading provider of embedded vision and computing solutions, specializing in the design and manufacture of high-performance, low-power embedded systems. Our products are widely used in applications including robotics, smart cities, smart traffic, and precision farming.

In the field of robotics, our embedded computing solutions are used to power robots for industrial automation, medical applications, and defense systems. For smart cities, our solutions are used to create intelligent traffic systems, public transportation systems, and security systems. In precision farming, they are used for monitoring crop health, weather conditions, and irrigation systems.


